Must-share information (formatted with Markdown):

- which versions are you using (SonarQube, Scanner, Plugin, and any relevant extension)

  - SonarQube Community Edition, version 9.3 (build 51899)
  - `"@casualbot/jest-sonar-reporter": "^2.2.5"`
  - `"sonarqube-scanner": "^2.8.1"`

Tried so far:

The documentation has a section on uploading test execution data using the Generic Test Execution Report Format. The reporter generates a file that looks like this (some information redacted with …):

```xml
<testExecutions version="1">
  <file path="src/__test__/redux/reducer.test.js">
    <testCase name="STEP returns the initial state of step" duration="3"/>
    <testCase name="STEP returns the current state of step" duration="0"/>
    <testCase name="STEP returns the updated state of step" duration="0"/>
    <testCase name="FETCH_VIEW_SETTINGS returns the initial state" duration="0"/>
    <testCase name="FETCH_VIEW_SETTINGS returns the current state" duration="1"/>
    <testCase name="FETCH_COMPANY_DETAILS returns the initial state" duration="0"/>
    <testCase name="FETCH_COMPANY_DETAILS returns the current state" duration="1"/>
    <testCase name="FETCH_SCHEDULING_POLICY returns the initial state" duration="0"/>
    <testCase name="FETCH_SCHEDULING_POLICY returns the current state" duration="1"/>
    ...
    ...
    <testCase name="FETCH_CLASS_INVENTORY returns the existing state" duration="3">
      <failure message="Error: expect(received).toBe(expected) // Object.is equality"><![CDATA[Error: expect(received).toBe(expected) // Object.is equality
Expected: 2
Received: 3
at Object.<anonymous>
<REDACTED>
      </failure>
    </testCase>
    ...
```

In the CI logs, I can see the file with the test execution data is being parsed:

```
INFO: Sensor Generic Test Executions Report
INFO: Parsing /home/runner/work/proj/proj/executions/test-report.xml
INFO: Imported test execution data for 121 files
INFO: Sensor Generic Test Executions Report (done) | time=85ms
```

The report is generated by `@casualbot/jest-sonar-reporter`, an updated fork of `jest-sonar-reporter`, which is configured in `package.json` like so:

```json
"reporters": [
  "default",
  [
    "jest-html-reporters",
    {
      "publicPath": "./jest-client-side-report",
      "pageTitle": "Client Side Unit Tests",
      "filename": "index.html"
    }
  ],
  [
    "@casualbot/jest-sonar-reporter",
    {
      "relativePaths": true,
      "outputName": "test-report.xml",
      "outputDirectory": "executions"
    }
  ]
],
```
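For context, here is a minimal sketch of how the scanner side might be pointed at that report. Only the report path (`executions/test-report.xml`) comes from the reporter settings in this post; the server URL, the environment variable names, and the `src` layout with `*.test.js` patterns are assumptions for illustration, not my actual setup.

```javascript
// Sketch of scanner options that reference the Jest-generated report.
// Everything except the report path is an assumed placeholder.
const scannerConfig = {
  serverUrl: process.env.SONAR_HOST_URL || 'http://localhost:9000', // assumed
  token: process.env.SONAR_TOKEN, // assumed environment variable name
  options: {
    'sonar.sources': 'src', // assumed project layout
    'sonar.tests': 'src', // tests live next to sources in this sketch
    'sonar.test.inclusions': 'src/**/*.test.js', // assumed test file pattern
    'sonar.exclusions': 'src/**/*.test.js', // keep test files out of the main sources
    // outputDirectory + outputName from the reporter configuration
    'sonar.testExecutionReportPaths': 'executions/test-report.xml',
  },
};

// With sonarqube-scanner 2.x, this object would typically be passed as:
//   require('sonarqube-scanner')(scannerConfig, () => process.exit());
console.log(scannerConfig.options['sonar.testExecutionReportPaths']);
```

The files listed in the report have to be indexed as test files for the import to pick them up, which is why the sketch sets `sonar.tests` and `sonar.test.inclusions`.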

From what I can tell, I have all the pieces in place to import test execution data, but I can’t find any information about what SonarQube actually does with it. I don’t see anything related to test executions in the SonarQube Community Edition dashboard. So here are my questions:

Trying to achieve

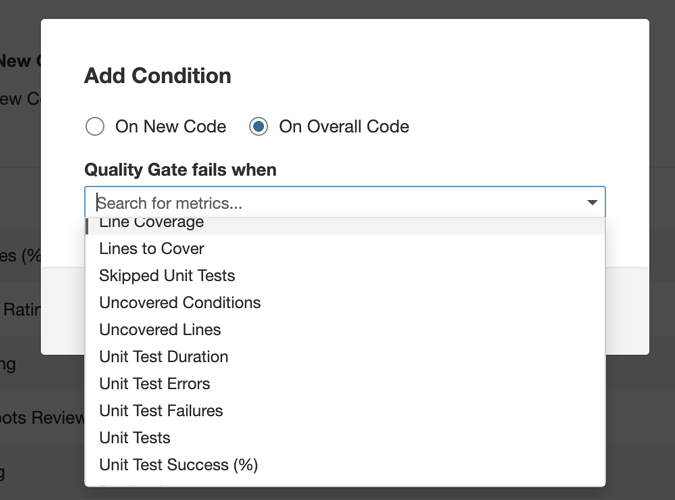

I want to analyze the test results and pass or fail the SonarQube Quality Gate depending on the percentage of unit tests that pass. If at least 90% pass, we pass the quality gate and deploy the build. If fewer than 90% pass, we fail the quality gate.

Does SonarQube support this? What exactly does SonarQube do with the test execution data? Where is it in the dashboard?

Let me clarify that I am not referring to test coverage. We already have that in place and require 80% coverage, but I also want to track the percentage of tests that pass. As I understand it, 80% of the code can be covered by tests while only 50% of those tests pass when executed. In other words, code coverage and test results are two completely separate metrics, and I’d like official clarity on that if possible.
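A small worked example of the distinction, using made-up numbers: coverage is a ratio over lines of code, while the pass rate is a ratio over test outcomes, so neither one constrains the other. The function names and figures below are hypothetical.

```javascript
// Coverage is computed from lines; pass rate is computed from test outcomes.
function coveragePercent(coveredLines, totalLines) {
  return (coveredLines * 100) / totalLines;
}

function passRatePercent(passedTests, executedTests) {
  return (passedTests * 100) / executedTests;
}

// Hypothetical project: 1000 lines, 120 tests.
const coverage = coveragePercent(800, 1000); // lines touched while tests ran
const passRate = passRatePercent(60, 120); // tests whose assertions held

console.log(`coverage=${coverage}% passRate=${passRate}%`);
// prints "coverage=80% passRate=50%"
```

As far as I can tell, a line counts as covered when a test executes it, whether or not that test's assertions succeed, which is how the two numbers can diverge like this.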

Thank you!

James